Deep Learning

Motivation

The universal approximation theorem states single-layer network (provided with sufficient neurons) is a universal approximator for univariate functions only. To capture more complex data, distributions (multivariate functions) we introduce complexity by adding layers (increase the depth of the network).

Overview

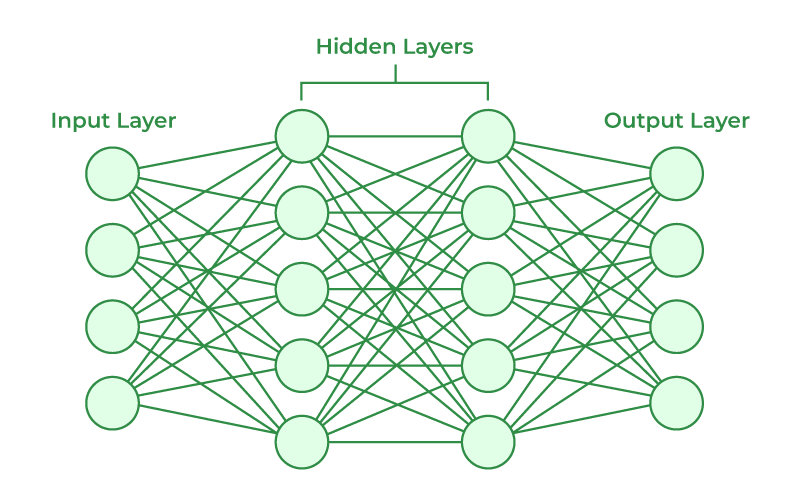

Neurons receive multiple inputs $[x_0, x_1, \dots, x_n]^T$ (or $a^{(l)}_n$ where $l$ is the layer), combine them using some linear function $z$ (e.g., weighted sum in FNNs or CNNs), and apply a non-linear activation function $f$ (aka $g$, $\phi$, or actual function names $\sigma$) to the result, passing on the result as neuron’s output $y$. Moreover, $z$ depends on parameters (vector of weights) $[\theta_1, \theta_2, \dots, \theta_n]^T$ which are fine-tuned through training. Stack of neurons form layers, layers are concentrated to form networks.

\[a^{(l)} = f^{(l)} (W^{(l)} a^{(l-1)} + b^{(l)})\]

Vectors and matrices are used to represent the grouping of linear equations (functions). Lowercase bold symbols represent vectors, uppercase bold symbols represent matrices.

\[\begin{bmatrix} y_1 \\ y_2 \end{bmatrix} = \begin{bmatrix} \theta_{11} & \theta_{12} & \theta_{13} \\ \theta_{21} & \theta_{22} & \theta_{23} \end{bmatrix} \begin{bmatrix} x_1 \\ x_2 \\ x_3 \end{bmatrix} + \begin{bmatrix} \beta_1 \\ \beta_2 \end{bmatrix}\] \[\mathbf{y} = W \mathbf{x} + \mathbf{\beta}\]Feed Forward Networks (FFNs)

Feed Forward Networks are a general category of neural networks where the data flows in one direction ($input \rightarrow hidden \rightarrow output$), with no loops. FFNs perform operations on its elements a few times (layers) and output some data. We can mathematically express a feed-forward pass (forward pass) from the input layer to layer 2. Here the parameters $\theta^{(l)}{ij}$ (weight from neuron $j$ in layer $l$ to neuron $i$ in layer $(l+1)$) of the network control how well the network performs a task. Note that $x_0=1$ is the bias unit (a fixed constant, for clean matrix operations), $\theta{j0}$ are the bias weights; trainable parameters that shift activation thresholds (making the learning more flexible and robust).

\[\mathbf{a}^{(2)} = \begin{pmatrix} a^{(2)}_1 \\ a^{(2)}_2 \\ a^{(2)}_3 \end{pmatrix} = f(\boldsymbol{\theta}^{(1)} \mathbf{a}^{(1)}) = f(\boldsymbol{\theta}^{(1)} \mathbf{x}) = f\!\left( \begin{pmatrix} \theta^{(1)}_{10} & \theta^{(1)}_{11} & \theta^{(1)}_{12} & \theta^{(1)}_{13} \\ \theta^{(1)}_{20} & \theta^{(1)}_{21} & \theta^{(1)}_{22} & \theta^{(1)}_{23} \\ \theta^{(1)}_{30} & \theta^{(1)}_{31} & \theta^{(1)}_{32} & \theta^{(1)}_{33} \end{pmatrix} \begin{pmatrix} x_0 \\ x_1 \\ x_2 \\ x_3 \end{pmatrix} \right)\]The parameters assigned $\theta^{(l)}_{ij}$ are often random at initialisation. We use known information about our data (its inherent structure, or labels associated to it) to modify our parameters $\theta$. We do so by defining a loss function to capture the accuracy of the predictions. We then use local optimisation techniques (based on gradient-descent and its variations) to nudge the parameters in the right direction. The loss function defines a hypersurface (aka solution space, search space or the feasible region) in a multi-dimensional space that has as many dimensions as there are network parameters. We use backpropagation to improve out network parameters $\theta$ by iteratively updating them in a direction that reduces the magnitude of the loss.

Parameters and Hyperparameters

Tasks solved by neural networks are inherently formulated as optimisation problems. In this setting, we need to decide how we will design and train out network. Training a network is finding the optimal parameters $\theta$ of the network according to some measure (e.g., loss function). Network parameters are usually referred to as weights and biases ($\omega$, $\beta$). The weights $\omega$ operate on the data inputs, and the biases $\beta$ are an extra parameter we add to provide the network with extra flexibility. The design/shape/form of a network is known as its architecture. This architecture is mostly controlled by the network hyperparameters. Examples of hyperparameters include; learning rate $\alpha$, batch size, loss type (function), number of layers, type of layers, etc. These are the network settings are not trainable (unlike $\theta$). Two most common ways of fine-tuning hyperparameters are grid search and random search. Hyperparameters are mostly tuned through the validations set.

Activation Functions

An activation function (aka transfer function) $f$ decides whether a neuron should be activated or not (whether its input carries importance for the network). Most simple example would be a binary step function that uses a threshold value to decide if the neuron should be activated (e.g., $0 \ for \ x \leq 0, 1 \ for \ x > 0$). Here, the gradient $\nabla$ of the step function is zero, hindering backpropagation. A linear activation function (also known as no activation) maps the input directly to the output $f(x) = x$. Linear activation functions do not allow the model to create complex mappings between the network’s input and outputs. Non-linear activation functions allow backpropagation (derivative function is now related to input). One of them widely used ones is the sigmoid (logistic) $\sigma$ activation that takes any real value as input and outputs a value in the range of 0 to 1 (normalisation). It is commonly used to predict probability as an output, its differentiable and provides a smooth gradient.

\[\sigma(x) = \frac{1}{1 + e^{-x}}\]Other common non-linear activation functions include, Tanh (an output of -1 to 1 with $\mu = 1$), ReLU (Rectified Linear Unit) where neurons are only activated if $x > 0$, and Leaky ReLU. Both $\sigma$ and $Tanh$ face the problem of vanishing gradients (as the gradient value approaches zero the network ceases to learn). $ReLU$ is computationally more efficient compared to them, it also accelerates the convergence of gradient descent towards the global minimum (of the loss function) due to its linear, non-saturating property. $Leaky ReLU$ is an improved version that fixes the Dying ReLU problem ($x < 0$ output gives a gradient value of zero) and enables backpropagation also for input values.

\[Tanh(x) = \frac{e^x - e^{-x}}{e^x + e^{-x}}\] \[ReLU(x) = \max(0, x)\] \[Leaky ReLU(x) = \max(0.1 * x, x)\]A special case of activation functions are those involving outputs from several neurons in each layer. Examples for this are Softmax ($z_i$ is the input value, $K$ is the total number of classes) and Maxout.

\[\sigma(x_i) = \frac{e^{z_i}}{\sum^K_{j=1} e^{z_j}}\] \[maxout(x_i) = \max_i{x_i}\]Loss Functions

Loss functions (objective functions, criterion) define a quantity that distills the model performance into a single number. We often use a measure of error, and try to minimise it. Two most common loss functions are the mean squared error (MSE) and cross entropy (CE). In MSE, $h_i(x)$ is an element of the output of the network, $y_i$ is an element of the target, $N$ is the total number of elements for this data sample. For example $h_i$ and $y_i$ could be pixels in an image, $N$ could be the total number of pixels in this image.

\[L_{MSE} = \frac{1}{N} \sum^N_i (y_i - h_i(x))^2\]In cross entropy loss (CE), $h_j(x)$ is the probability (e.g., calculated using softmax) of the sample to belong to class $j$, $y_j$ is the real probability of the sample belonging to this class (either 1 or 0), and $M$ is the total number of classes used in this classification problem.

\[L_{CE} = \sum^M_j y_j log(h_j(x))\]It is trivial to see that if we have only two classes (binary classification problem) we can use the binary cross entropy (BCE). Here the cost function will change depending on the value of y (0 or 1). The last network layer of a binary classifier that uses BCE loss is equivalent to a logistic regression. Here the activation of the last layer would be a sigmoid function $\sigma$, which also defines a decision boundary ($P(y = 1 \mid \theta, x) = \sigma(\theta^T x)$ where $\theta^Tx = 0$ would fall on the decision boundary).

\[L_{BCE} = -y log(h(x)) - (1-y) log(1-h(x))\]Gradient Descent and Backpropagation

We use Gradient Descent, a local optimisation technique to provide us with the information of the local curvature of the loss. We update our model by changing the parameters in the steepest direction provided by the gradient expression. For simplicit, if we assume the network is a simple multiplication by the parameters ($\theta x^i$), we can define the parameter optimisation as following:

\[J(\theta) = \frac{1}{2m} \sum^m_{i = 1} (y^i - \theta x^i)^2\] \[\theta_b = \theta_a - \alpha \frac{\partial J(\theta_a)}{\partial \theta}\]In practice our networks may have many more parameters (not just $\theta$) and also have dependencies between them.

Calculating Backpropagation

Backpropagation is a combination of the gradient descent and the derivative chain rule. Consider a very simple network of 4 layers with 1 neuron each $\set{a_1, a_2, a_3, a_4}$. In total we have 6 parameters $\theta$ (that is 3 pairs of $(w_i, b_i)$). From this we would get a sample as the output $(a_4, y)$, denoting the prediction and the target. Similarly, we would have a loss function $C = \frac{1}{2} (a_4 - y)^2$. We would then have to calculate the derivative of this loss function ($J(\theta)$ or $C$) with respect to each of these 6 parameters. For example, given our network outputs:

\(a_2 = g(z_2) \ where \ z_2 = w_1a_1 + b_1\) \(a_3 = g(z_3) \ where \ z_3 = w_2a_2 + b_2\) \(a_4 = g(z_4) \ where \ z_4 = w_3a_3 + b_3\)

Given we can easily find the derivative of our loss function with respect to the final output $a_4$. We can then iteratively calculate the derivative of the loss function (for all layers, weights, and biases: $x \in \set{a, \omega, b}$) using our formula. Ultimately, we are able to calculate the derviative of $C$ according to each of the 6 parameters. The same principles applies to networks with more neurons.

\[\frac{\partial C}{\partial a_4} = \frac{1}{2} 2(a_4 - y) = a_4 - y\] \[\frac{\partial C}{\partial x_{l-1}} = \frac{\partial C}{\partial a_l} \frac{\partial a_l}{\partial z_l} \frac{\partial z_l}{\partial x_{l-1}}\]Batch, Mini-Batch and Stochastic Gradient Descent

In practice, the datasets are compounded of hundreds, thousands or even millions of samples. There are 3 different strategies to combine the data we have, that define what a single iteration would look like. Batch gradient descent uses all the data samples (batch) we have, and for each calculates a gradient. Once we have calculated all the gradients corresponding to all data values, we combine them (e.g., average, sum) and update the model parameters using a learning rate $\alpha$. Stochastic gradient descent uses only one random sample of the data to calculate the gradient and with this gradient we update the model before moving onto the next iteration. Mini-batch gradient descent uses subsets of the data (mini-batches) to compute gradients corresponding to each sample in a subset, the combines them and updates the model parameters. Every time we pass all samples available in our dataset through our network we have performed an epoch. So say our batch size equals our dataset size, then with batch gradient descent we would perform 1 iteration (1 forward pass and 1 backpropagation) per epoch.

Bias, Variance and Regularisers

Bias (underfitting) and variance (overfitting) in neural networks are analogous to their use in polynomial fitting. The capacity of the model is closely related to the model complexity. Increasing the capacity of a model increases how expressive it can be, and how much variance in data it can accommodate. The capacity should be adapted correctly to capture the distribution of the data in the dataset. A natural solution to avoid bias (underfitting) is to increase model capacity. A natural solution to solve overfitting is to use regularisation (reduce variance without increasing bias). Regularisers can be explicit (add terms to the loss function), or implicit (droupout, data augmentation).

Two most common explicit regularisers are the $L_1$ and $L_2$, where in both cases $\lambda$ controls the strength of the regularisation.

\[L_1: J(\theta) = \frac{1}{2m} \big( \sum^m_{i = 1} (y^i - \theta x^i)^2 + \lambda \sum^n_{j=1}\mid \theta_j \mid \big)\] \[L_2: J(\theta) = \frac{1}{2m} \big( \sum^m_{i = 1} (y^i - \theta x^i)^2 + \lambda \sum^n_{j=1} \theta_j^2 \big)\]Three commonly used robust implicit regularisers are dropout, data augmentation, and batch normalisation. Dropout is implemented by defining a parameter $p$ that defines the probability of a neuron in a layer to be dropped (applied only in training). During the inference we scale the outputs of the neurons by a factor $p$ to keep the magnitude of the data consistent as it goes thourgh the network. Data augmentation is a strategy to increase our dataset size by augmenting (rorate, translate, zoom, crop, add noise, change brightness) existing samples into new ones. Batch normalisation normalises (standardises) values at each layer of the network (instead of just on the input layer). This normalisation is controlled by parameters that are also update during training. Some interpret it as a mean of reducing internal covariate shift (changes in distribution of the data as it navigates through the network), while other as a regulariser that smooths the objective function. It reduces the probability that vanishing or exploding gradients occur.

Sequence Models

The importance of RNNs and LSTMs is related to processing of sequential data. In sequential datasets, data points are sequentially dependent on each other (unlike images). Such data can include; text, stock price, sequence of images, sequence of labels, and etc. Recurrent neural networks understand data where there is a dependency between what comes before and after. In general we do 2 things with such data:

- Process and understand relations or causality between elements of the sequence (understand what this sequence means)

- Be able to generate sequential data as output

For example, personalised text generation, sentiment analysis, or language translation.

Text Representation

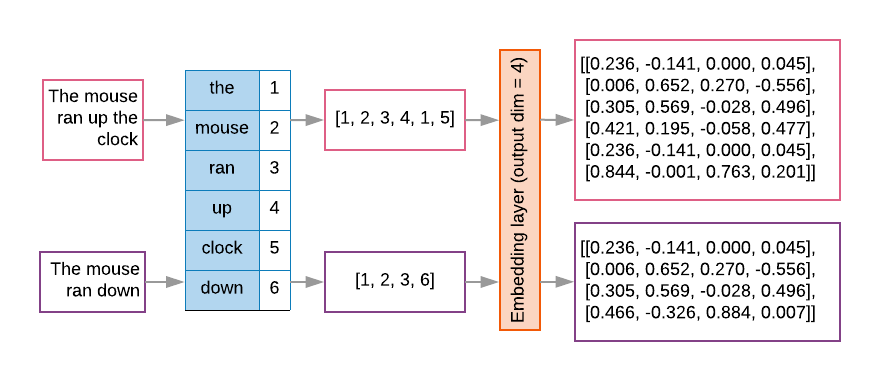

Text is not numerical (unlike images for example). Transforming text datasets into numerical form consists of the steps of tokenisation and encoding. A token is the smallest unit of text we care about. Tokenisation is the process of encoding a string of text into numerical integer IDs ($text \rightarrow tokens \rightarrow token_IDS$):

\["hello \ world!" \rightarrow \set{hello, world, !} \rightarrow \set{7592, 2088, 999}\]Once tokenised, we need to encode tokens into vectors. The two ways to encode tokens are: one-hot encoding and embedding. With one-hot encoding we transform numerical integer IDs (could be word or character based) into vectors of 1s and 0s. We build a mini-vocab from the input tokens, where each token becomes a binary vector, where $len(v) = len(vocab)$ and duplicate words (or characters) share the same vector (but not the position/index).

When we use words instead of characters the dictionary will be huge. We need smaller dense representation vectors. Words have meaning and relations, so we can represent word tokens in a dense vector space. This representation is called word embeddings. Sequence models often have such an embedding layer as their first layer ($\forall{word_index}, index \rightarrow vector$). The dense vector represents that wor’d lcoation in the semantic space.

Often we use embeddings that have already been trained by others. GloVe (Global Vectors for Word Representation) is an unsupervised learning algorithm for obtaining vector representations of words. These embeddings are packaged within torchtext.vocab. For example, we can download the version with 6B tokens and 300 dimensions using GloVe(name='6B', dim=300). This suggets the training corpus GloVe was trained on had 6 bilion tokens of text (from Wikipedia + Gigaword 5), and each embedding contains 300 dimensions. Overall, it contains around ~400,000 unique token embeddings. Most important functions in GloVe are stoi (string to index) and itos (index to string). As part of torchtext.datasets there are also a number of text datasets available to us. For example AG_NEWS contains extracts of news articles labelled according to the news type.

Recurrent Neural Networks (RNNs)

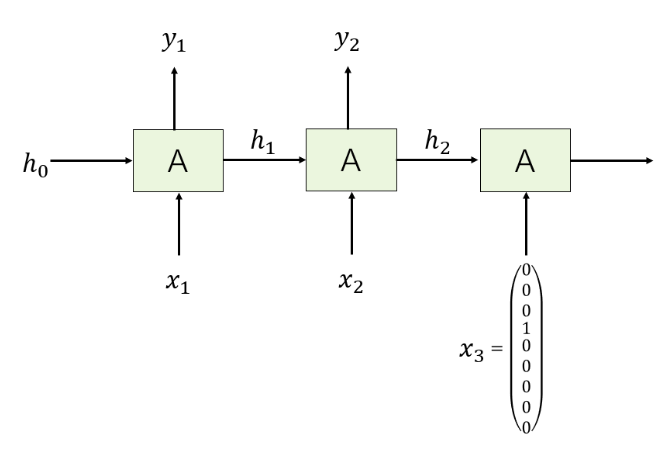

The first type of architecture introduced to deal with sequential data is Recurrent Neural Networks (RNNs). RNNs introduce context in the form of a recurrent loop (outputs returning as inputs for further processing). They take some input $x_t$ ($t$ denoting a specific element of the input sequence $X$), and produce some output $h_t$ (related to a specific point in the sequence through the subscript $t$). At the same time RNN also has a loop that goes over itself and allows introducing the concept of context. The loop represents the fact that RNN is applied multiple times to each element of sequence $x_0, x_1, \dots, x_t$ to generate outputs $h_0, h_1, \dots, h_t$. Here some information is shared from one application to the next, called hidden state.

It is important to note that inputs $x_i \in X$ and outputs $h_i \in H$ in all steps are different to each other, while the RNN itself $A$ remains unchanged. That is, the same set of weights and activations are used to process each element of the sequence. The weights matrices $W_{xh}$ (weights from input), $W_{hh}$ (recurent weights), and $W_{hy}$ (weights from $hidden \rightarrow output$) are identical at every time step, what changes at each step is the input $x_t$ and the hidden state $h_t$. This drastically reduces the number of parameters used. We can define the architecture of RNN, and the processing of first element in the sequence as:

\[h_t = A(h_{t-1}, x_t) = tanh(W_{hh}h_{t-1} + W_{xh}x_t) \\ y_t = W_{hy}h_t\] \[h_0 = [0, 0, 0, 0, 0]^T \\ x_1 = [0, 0, 1, 0, 0, 0, 0, 0, 0]^T\] \[h_1 = A(h_0, x_1) = tanh(W_{hh}h_0 + W_{xh}x_1) \\ y_1 = W_{hy}h_1\]We take the first character $x_1$, combine with current hidden state $h_0$ (its dimensions being a hyperparameter) to derive $h_1$, and calculate the associated output vector $y_1$ (of size equal to vocabulary). Here $W_{hh}$ would be a $5\times5$ matrix, $W_{xh}$ would be a $5\times9$ matrix, and $W_{hy}$ would be a $9\times5$ matrix. The training works through the loss function.

\[Given \ y_1 = W_{hy}h_1 \rightarrow cross\_entropy(Softmax(y_1), x_2)\]We tranform $y_1$ into a $Softmax$ vector, and calculate cross-entropy through the second character $x_2$ (label) of the sequence. We repeat this process for all characters until we reach the end of the sequence. We add up all of their loss contributions. Finally, we do backpropagation to calculate gradients according to each trainable parameter, and modify/train these parameters by gradient descent. Then we move to the next sequence of $p$ (size of $p$ being a hyperparameter) characters, until the end of training set is reached.

To generate a new sequence of $n$ characters, we first randomly sample first character $x_1$. We combine it with initial (zero) hidden state vector value $h_0$ to derive $h_1$. We calculate the associated output vector $y_1$ and transform it into a $Softmax$ vector. Then we sample a new character $x_2$ based on softmax probability. Hence, the prediction is more of a classification task (softmax is used to ensure probability over all possible words). We continue until the required number of characters have been generated.

Long Short-Term Memory (LSTM)

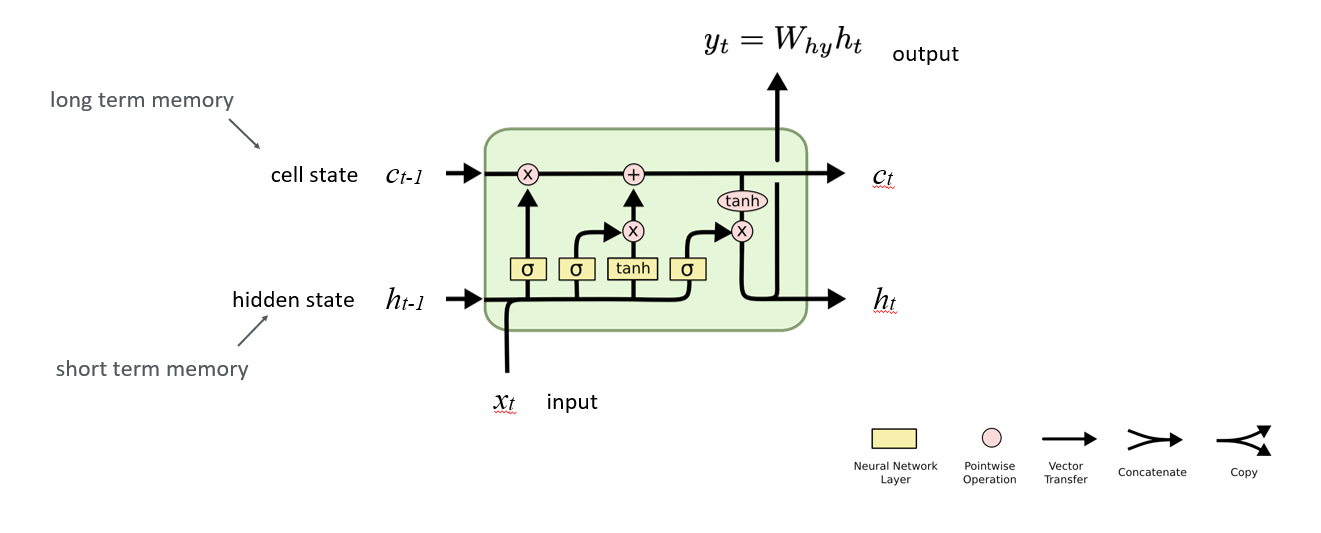

The core concept behind LSTMs is the cell state ($c_{t-l}$). Cell state directly connects to subsequent applications of the network for every element in the sequence. It provides a way to store long-term memory, and acts as a sort of a skip connection to avoid exploding and vanishing gradient problems we encountered with RNNs. There is also the forget gate that decides whether there is any information in the cell state that we no longer need. The input gate (that takes in $x_t$ and $h_{t-l}$) together with the candidate update decides what part of the input should be used to updat the cell state. Combining the forget and input gates, the LSTM updates the cell state. Finally, the output gate determines what part of the cell state should influence the next output. These can be formalised mathematically as follows:

As a note you can compare the structure of this LSTM to that of a vanilla RNN:

\[h_t = tanh(W \begin{pmatrix} h_{t-1} \\ x_t \end{pmatrix})\]Transformers

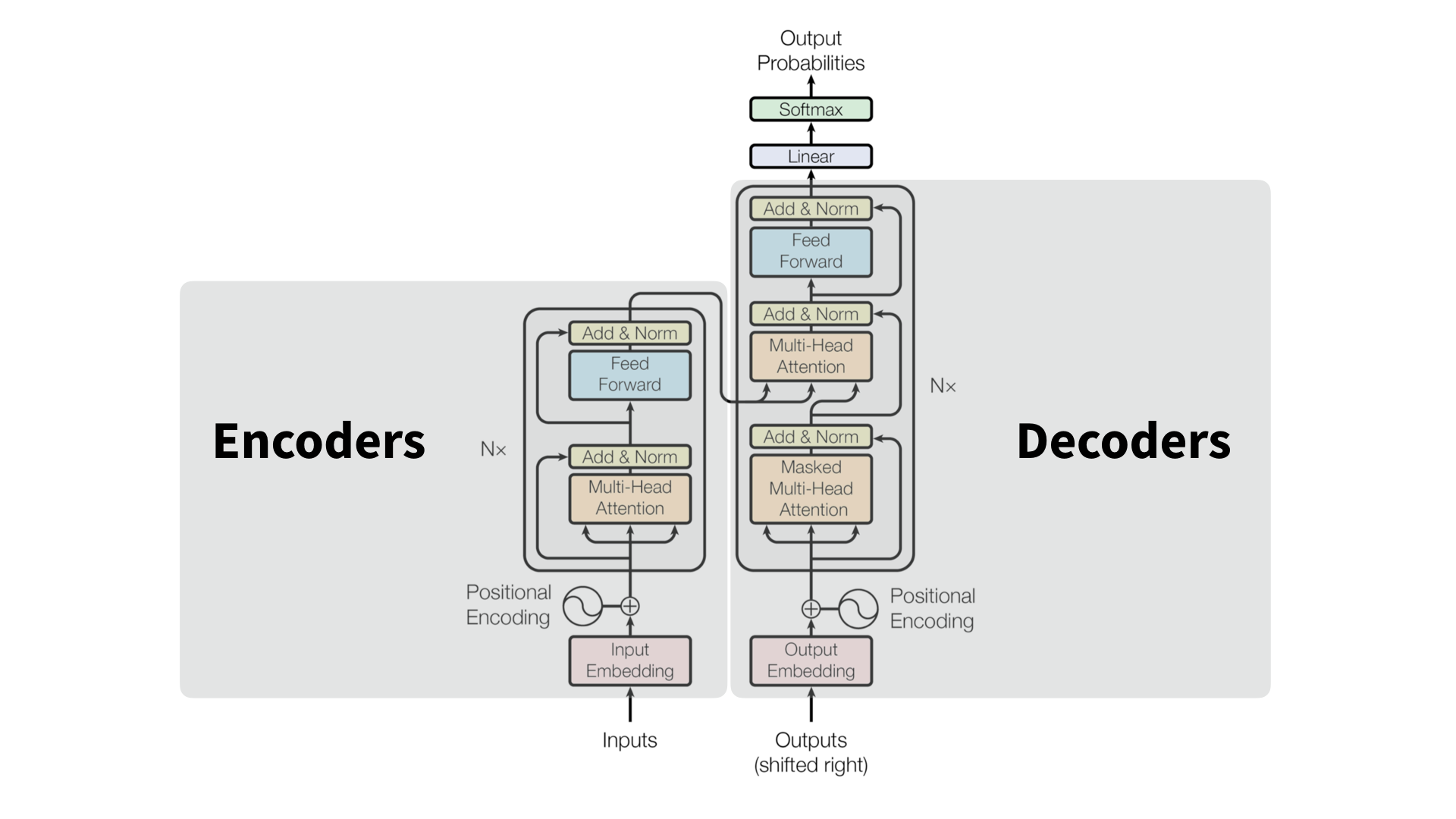

Before entering the core architecture we prepare and process the input. That is, we embed the input and apply positional encoding (so that the model can understand sequence order). The original Transformer design (Vaswani et al., 2017) consists of an encoder $E$ (reads and encodes the input sequence into a set of contextual embeddings) and a decoder $D$ (generates an output sequence attending both to its own previous outputs and to the encoder’s representations). Both encoder and decoder blocks contain repeated sublayers of multi-headed attention and feed-forward (MLP). Finally, both sublayer components are followed by residual and layer normalisation steps to ensure stabiliy and gradient flow.

\[N\times \begin{cases} E' = LayerNorm(E + MHA(E)) \\ E'' = LayerNorm(E' + FFN(E)) \\ H = Encoder(X) \end{cases}\] \[N\times \begin{cases} D' = LayerNorm(D + MHA_{masked}(D)) \\ D'' = LayerNorm(D' + MHA_{cross}(D', H)) \\ D''' = LayerNorm(D'' + FFN(D'')) \\ \end{cases}\]

The cross-attention sublayer $MHA_{cross}$ is where the decoder queries encoder’s hidden state $H$, to incorporate relevant context into predictions. After the final decoder layer, the model produces logits $Z$ for the next token prediction. Subsequently, we apply a liner transformation ($W^T_E$ often tied to the input embedding matrix). Finally, $softmax$ converts logits to a probability distribution over the vocabulary. The decoder then samples or greedily selects the next token based on these probabilities. An important note is that the final computation you perform to predict the next token (output of the decoder) is entirely the function of the last vector in the sequence.

\[logits = ZW^T_E + b\] \[P(t_{t+1} \mid t_{\leq i}) = softmax(logits)\]In the original Transformer architecture there are 6 encoders and 6 decoders. The individual encoder and decoder layers are stacked one after another. They process the information sequentially. The final encoded layer returns the encoded representation $H$. Every decoder layer gets the same $H$ output, to enable the encoder-decoder attention.

\[\cdots \rightarrow E_1 \rightarrow E_2 \cdots E_n \rightarrow H\] \[E_n \rightarrow D_1(H) \rightarrow D_2(H) \cdots D_n(H) \rightarrow \cdots\]References:

Enjoy Reading This Article?

Here are some more articles you might like to read next: