Deep Q-Learning

Q-Learning and Approximate

Q-Learning is an off-policy, model-free learning method that directly learns Q-values of states. Recall the Bellman equation for Q-value computation and the Q-Learning update formula.

\[Q^*(s, a) = \sum_{s'} T(s, a, s') \big[ R(s, a, s') + \gamma V^*(s') \big]\] \[Q_{k+1}(s, a) \leftarrow \sum_{s'}T(s, a, s') \big[R(s, a, s') + \gamma \max_{a'}Q_k(s', a') \big]\] \[sample = R(s, a, s') + \gamma \max_{a'}Q(s', a')\] \[Q(s, a) \leftarrow (1 - \alpha)Q(s, a) + \alpha \cdot sample\]Approximate Q-Learning tackles the problems with memory (saving tabular values of Q-states) by generalising the learning process through feature-based representation of states. Here each state can be represented as a feature vector.

\[Q(s, a) = w_1 \cdot f_1(s, a) + w_2 \cdot f_2(s, a) + \dots + w_n \cdot f_n(s, a) = \vec{w} \cdot \vec{f}(s, a)\] \[\vec{f}(s, a) = \big[f_1(s, a) \ f_2(s, a) \dots f_n(s, a)\big]^T\]Incorporating Deep Learning

DQL is one of the Approximate Q-Learning techniques, that uses neural networks to generalise the learning process. We approximate the Q-values using a parametrised Q-function $Q_{\theta}(s, a)$. That is, we approximate possible Q-values for each possible action at a given state. It is impossible to fully capture current environment from just one frame $x_t$. Therefore, we consider sequences of actions and observations $x_1, a_1, x_2, \cdots, a_{t-1}, x_t$ and learn game strategies that depend on these sequences. Say we trying to predict possible actions in an Atari game represented by a pixelated image $(210, 160, 3)$ with a frame of $210 \times 160$, 3 channels and each value ranging from 0 to 255 (rgb). In such scenarios we would utilise a Deep Q-Network (DQN) with a combination of convolutional and fully-connected layers. We could take an input of 4 stacked frames passed as state $s$, and output a vector of Q-values for each possible action at that state. Then like Q-Learning we use the $\epsilon$-greedy policy to select which action to take (exploration vs exploitation).

Naive DQL Algorithm

If the optimal value $Q^*(s’, a’)$ of the sequence $s’$ at the next time step was known for all possible actions $a’$, then the optimal strategy is to select the action $a’$ maximising the expected value.

\[Q^*(s, a) = \mathbb{E}_{s'} \bigg[r + \gamma \max_{a'} Q^*(s', a') \mid s, a \bigg]\]It is common to use a function approximator to estimate the action-value function, $Q(s, a, \theta) \approx Q^*(s, a)$. Typicaly this is a linear function approximator, but we can also use a non-linear neural network. We refer to a neural network function approximator with weights $\theta$ as a Q-Network. We can train a Q-Network to minimise a sequence of loss functions $L_i(\theta_i)$ that changes each iteration $i$.

\[L_i(\theta_i) = \mathbb{E}_{s, a} \bigg [(y_i - Q( s, a; \theta_i))^2 \bigg]\] \[y_i = \mathbb{E}_s' \bigg[r + \gamma \max_{a'} Q(s', a'; \theta_{i-1}) \mid s, a \bigg]\]The parameters from the previous iteration $\theta_{i-1}$ are held fixed while optimising the loss function $L_i(\theta_i)$. Note that here the target also depends on network weights, this is in contrast with the targets used for supervised learning which are fixed before the learning begins. It is often computationally efficient to optimise the loss function by stochastic gradient descent.

DQN utilises a technqiue known as experience replay where we store the agen’t experiences at each time-step $e_t = (s_t, a_t, r_t, s_{t+1})$ in a data-set $\mathcal{D} = e_1, \dots, e_N$ pooled over many episodes into a replay memory. In sampling pahse we perform actions and store the observed experience tuples in this replay memory. During the inner loop of the algorithm (training), we apply the Q-learning updates to samples of experience $e ~ \mathcal{D}$ drawn at random from the pool of stored samples. After performing experience replay, the agent selects and executes an action according to an $\epsilon$-greedy policy. We learn from this samples using the loss function (comparing the Q-value predictions and the Q-target). Subsequently, we use gradient descent to update our model parameters.

Hence, two important ingredients of the DQL algorithm are the use of a target network, and use of the experience replay. The target network with parameters $\theta^{-}$, is the same as online network except that its parameters are copied every $\tau$ steps from the online network, so that then $\theta^{-}_t = \theta_t$, and kept fixed on all other steps. The target used by DQL is then defined as:

\[Y^{DQL}_t = R_{t+1} + \gamma \max_a Q(S_{t+1}, a; \theta^-_t)\]Double Deep Q-Learning

The max operator in standard Q-learning and DQL uses the same values to both select and to evaluate an action. This makes it more likely to select overestimated values, resulting in overoptimistic value estimates. Double Q-Learning can be used to handle problems with overestimation by decoupling the action selection from the target Q-value generation. This idea behind the traditional Double Q-Learning (in tabular setting) can be generalised to work with large-scale function approximators. The target network in DQN architecture provides a natural candidate for the second value function, without having to introduce additional networks. Double DQN splits roles (1) select action $a^*$ with the online network $\theta$, (2) evaluate that action $Y^{DoubleDQN}_t$ with the target network $\theta^-$. Overall, the Double DQN update formula is the same as standard DQN , but replacing the target as follows:

\[a^* = \arg \max_a Q(S_{t+1}, a; \theta)\] \[Y^{DoubleDQN}_t = r + \gamma Q(S_{t+1}, a^*; \theta^-)\] \[Y^{DoubleDQN}_t = R_{t+1} + \gamma Q(S_{t+1}, \arg \max_a Q(S_{t+1}, a; \theta_t), \theta^-_t)\]The update to the target network stays unchanged from the standard DQL algorithm and remains a periodic copy of the online network.

Algorithm Implementation

As a demonstration we use PyTorch to train a Deep Q Learning (DQN) agent on the CartPole-v1 task from Gymnasium. Key steps involve calculating the temporal difference $\delta$ and defining a loss function (where $B$ is a batch of transitions sampled from memory replay).

\[\delta = Q(s, a) - (r + \gamma \max^{'}_{a} Q(s', a))\] \[\lambda = \frac{1}{\mid B \mid} \sum_{(s, a, s', r) \in B} \lambda(\delta)\] \[where \ \lambda(\delta) = \begin{cases} \frac{1}{2} \delta^2 \ \ for \mid \delta \mid \leq 1 \\ \mid \delta \mid - \frac{1}{2} \ \ otherwise \end{cases}\]We can define our Q-Network to output 2 values for 2 possible actions from our current state $Q(s, left)$ and $Q(s, right)$ in the CartPole problem.

class DQN(nn.Module):

def __init__(self, n_observations, n_actions):

super(DQN, self).__init__()

self.layer1 = nn.Linear(n_observations, 128)

self.layer2 = nn.Linear(128, 128)

self.layer3 = nn.Linear(128, n_actions)

def forward(self, x):

x = F.relu(self.layer1(x))

x = F.relu(self.layer2(x))

return self.layer3(x)

Similarly, we also define a class to manage the replay memory, where transition $T = (s, a, s’, r)$.

class ReplayMemory(object):

def __init__(self, capacity):

self.memory = deque([], maxlen=capacity)

def push(self, *args):

"""Save a transition"""

self.memory.append(Transition(*args))

def sample(self, batch_size):

return random.sample(self.memory, batch_size)

def __len__(self):

return len(self.memory)

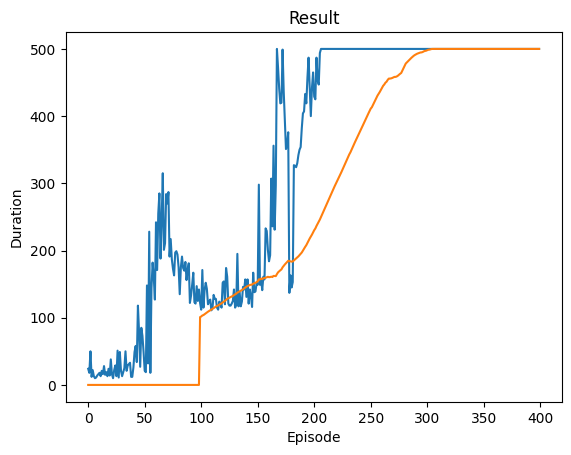

We select our actions based on the standard $\epsilon$-greedy policy. The main training loop, runs $N$ episodes. It consists of selection an action $a$, and taking that action in the environment to get $o_t, r_t$ and termination condition checks. We push the current $(s, a, s’, r)$ into the replay memory, update the state $s \leftarrow s’$ and take an optimisation step for our policy network. The inner optimisation step consists of sampling a batch $B$ from the memory, calculating the loss (with state action values from $\theta$ and expected state action values from $\theta^{-}$) and updating the network weights Subsequently, in the main loop we also update the target network weights $\theta^- \leftarrow \tau \theta + (1-\tau) \theta^{-}$. Here is an example output from the algorithm ran for $N=400$ episodes. The algorithm seems to converge to the default reward threshold (duration termination condition) of 500 steps after around 200 episodes.

Summary

- Online Q-Network $\theta$ actively learns and selects actions

- Target Q-Network $\theta^-$ is a frozen copy of online network only used to compute stable targets

- Experience Replay Buffer is used to sample transitions

References

Enjoy Reading This Article?

Here are some more articles you might like to read next: